If you’ve been analyzing server hardware as long as we have, you know 2026 isn't just another refresh cycle; it’s a complete fragmentation of the market. The "best" GPU is no longer just the most expensive one; it’s the one that handles your specific workload without blowing up your budget.

Whether you are a CTO at a scaling AI startup in Bengaluru or running a render farm in Los Angeles, choosing between consumer-grade powerhouses and data center titans is tougher than ever.

At ProX PC, we are breaking this down. We are comparing the disruptive NVIDIA RTX 5090, the versatile RTX Pro 6000 (Blackwell), and the enterprise standards, the H100 and H200. We will look at ROI, VRAM bottlenecks, and the setup each card needs to stay cool.

1. The GPU with Best ROI: NVIDIA RTX 5090

Best For: Rendering Farms, LLM Inference, Visual Effects, Startups.

The RTX 5090 changes the math for high-performance computing. With the Blackwell architecture trickling down to the consumer line, you get absurd raw compute performance for a fraction of the cost of enterprise cards.

- The Sweet Spot: For pure floating-point performance per dollar, nothing beats this card. If your workload (like Redshift, Octane, or V-Ray) scales with CUDA core count rather than VRAM capacity, stacking 5090s is the smartest financial move we can recommend.

- The Challenge: It’s an actively cooled card. You cannot pack several of these into a standard server without overheating; they need dedicated airflow channels.

The Solution: Pro Maestro GE A (Active GPU Support)

We designed the Pro Maestro GE (8 GPU Server) specifically to handle the thermal output of high-wattage active cards.

- Capacity: Supports up to 8x NVIDIA RTX 5090 GPUs.

- Why it wins: You get near-H100 aggregate compute for rendering tasks at roughly 20% of the hardware cost. For creative studios and early-stage AI startups running inference on quantized models, this server offers the best ROI in the market.
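To put a rough number on that thermal output, here is a minimal sketch of the GPU heat load in a fully populated node. It assumes NVIDIA's published 575 W board power for the RTX 5090 (a figure not stated above) and counts the GPUs only; CPUs, drives, and fans add to it. The function names are ours, for illustration.

```python
# Assumption: 575 W is NVIDIA's published board power for the RTX 5090.
# It is not a figure from this article, and real draw varies with workload.
RTX_5090_BOARD_POWER_W = 575

# Standard conversion factor: 1 W is roughly 3.412 BTU/hr.
BTU_PER_HR_PER_WATT = 3.412


def gpu_heat_load_w(gpu_count: int, board_power_w: float) -> float:
    """Worst-case heat the GPUs alone dump into the chassis, in watts."""
    return gpu_count * board_power_w


def watts_to_btu_per_hr(watts: float) -> float:
    """Convert a heat load in watts to BTU/hr, the unit most cooling gear is rated in."""
    return watts * BTU_PER_HR_PER_WATT


if __name__ == "__main__":
    load_w = gpu_heat_load_w(8, RTX_5090_BOARD_POWER_W)
    print(f"GPU heat load: {load_w:.0f} W, about {watts_to_btu_per_hr(load_w):.0f} BTU/hr")
```

Under that assumption, eight cards add up to 4,600 W of GPU heat before anything else in the box is counted, which is why chassis airflow matters so much for this class of card.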

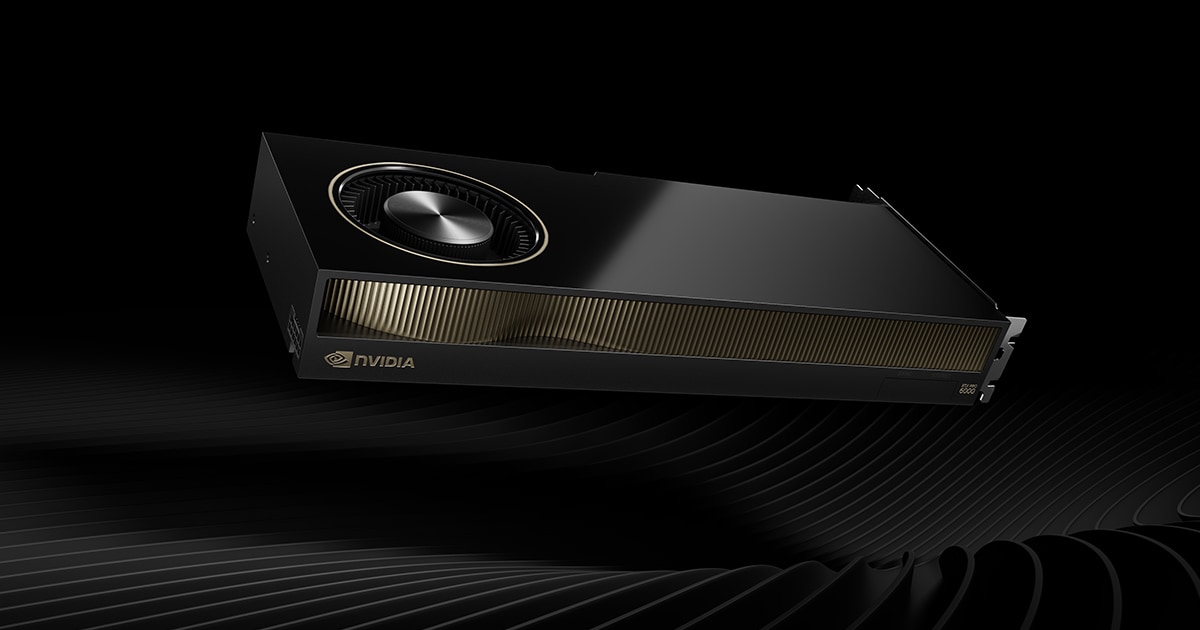

2. The Professional Bridge: NVIDIA RTX Pro 6000 (Blackwell)

Best For: High-End Workstations, Digital Twins, Industrial Metaverse, Heavy GenAI Fine-Tuning.

For serious professional workloads, the Pro 6000 Blackwell stands out because it offers the maximum VRAM for the price in a single GPU.

If you are a solo researcher, a data scientist, or a studio that needs a massive memory buffer but cannot justify the cost of an H100, this is your card. You get 96GB of GDDR7 memory, triple the 5090's capacity, at a price point that makes it the undisputed value leader for high-memory workloads.
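A quick way to see what that extra memory buys is to estimate whether a model's weights fit on one card. The sketch below is a back-of-envelope check, not a sizing tool: it counts weights only (no KV cache, activations, or framework overhead), and the 10% headroom is our own assumption. The 32GB figure for the 5090 follows from the "triple" comparison above.

```python
def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes).

    params_billion * 1e9 params * bytes_per_param / 1e9 bytes per GB
    simplifies to params_billion * bytes_per_param.
    """
    return params_billion * bytes_per_param


def fits(params_billion: float, bytes_per_param: float,
         vram_gb: float, usable_fraction: float = 0.9) -> bool:
    """True if the weights alone fit in the usable share of VRAM.

    usable_fraction is an assumed safety margin, not a measured value.
    """
    return weights_gb(params_billion, bytes_per_param) <= vram_gb * usable_fraction


if __name__ == "__main__":
    # Hypothetical example: a 70B-parameter model quantized to 8 bits (1 byte/param).
    for name, vram in (("RTX 5090", 32), ("RTX Pro 6000", 96)):
        print(name, "fits 70B @ 8-bit:", fits(70, 1.0, vram))
```

By this estimate a 70B model at 8-bit needs about 70GB for weights, which clears a single 96GB card but not a 32GB one. Long contexts and large batches will need more than the weights-only figure suggests.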

It's not just one card, though. It's a platform available in three distinct variants, and choosing the wrong one can limit your deployment.

- Workstation Edition (Active): The standard dual-slot active card for desktops.

- Workstation Max-Q (Active): Optimized for lower power and higher density in workstations.

- Server Edition (Passive): Designed purely for rack-mount airflow.

Which Setup Fits Your Variant?

We have mapped these variants to specific setups to ensure you get maximum performance:

For the Workstation and Max-Q (Active) cards, we offer an "Active" optimized setup. It provides the physical spacing and airflow required for the active fans on these cards to breathe. It gives your team massive local compute, at the cost of a larger form factor.

- For Server (Passive) Cards - 4 GPU Config: Choose the Pro Maestro GQ P.

If you need a compact, high-performance node for inference or design, the GQ P handles four passive Pro 6000s effortlessly. It relies on the server's high-static-pressure fans to cool the cards, keeping the form factor dense and efficient.

- For Server (Passive) Cards - 10 GPU Config: Choose the Pro Maestro GD.

When you need maximum density, the Pro Maestro GD is the best possible 10 GPU server platform we offer in India. It turns the Pro 6000 Server Edition into a supercomputing-class node, allowing you to fit nearly 1TB of VRAM (10 x 96GB = 960GB) in a single machine.
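The "nearly 1TB" figure is simple multiplication, and the same arithmetic applies to the other configurations in this article. A minimal sketch, using the per-card capacities quoted in the text (the helper name is ours):

```python
# Per-card capacity quoted in this article.
PRO_6000_VRAM_GB = 96


def node_vram_gb(gpu_count: int, vram_per_gpu_gb: int) -> int:
    """Total VRAM in one node, assuming every slot holds the same card."""
    return gpu_count * vram_per_gpu_gb


if __name__ == "__main__":
    ten_gpu = node_vram_gb(10, PRO_6000_VRAM_GB)   # 10-GPU Pro Maestro GD config
    four_gpu = node_vram_gb(4, PRO_6000_VRAM_GB)   # 4-GPU Pro Maestro GQ P config
    print(f"10x Pro 6000: {ten_gpu} GB ({ten_gpu / 1000:.2f} TB)")
    print(f" 4x Pro 6000: {four_gpu} GB")
```

Keep in mind that this is pooled capacity across separate cards. A model larger than 96GB still has to be split across GPUs by your framework.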

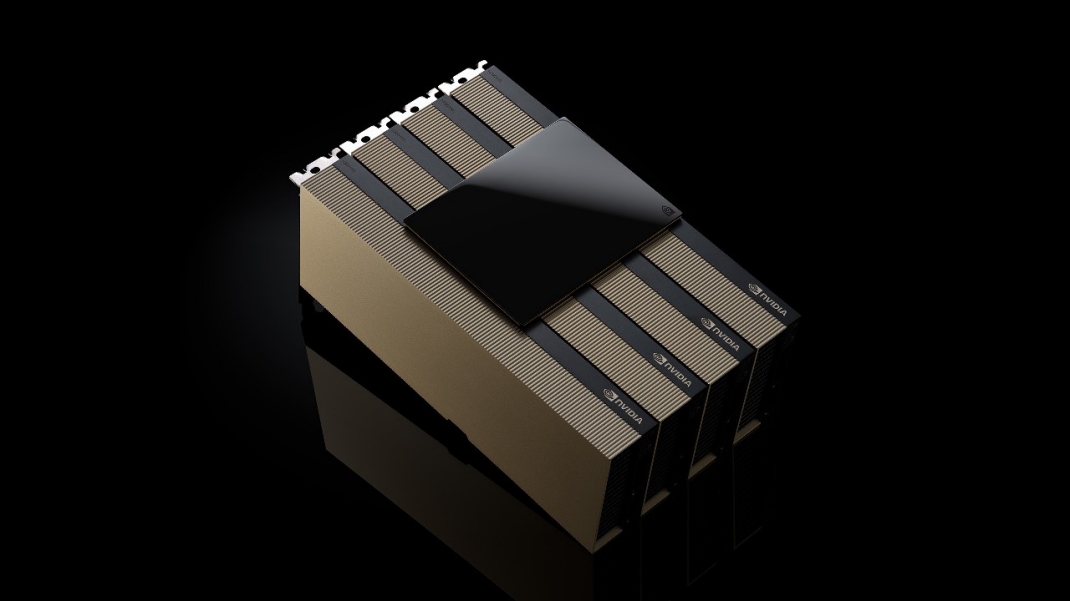

3. The Data Center Titans: NVIDIA H100 & H200

Best For: LLM Training, Cloud Service Providers, National Supercomputing, Heavy RAG Pipelines.

When "time-to-train" matters more than budget, you buy Hopper. The H100 is the industry standard, but the H200 is the bandwidth leader.

- H100 vs. H200: The H100 comes with 80GB of HBM3. The H200 pushes this to 141GB of HBM3e. That "e" is generally read as "extended," but practically it means speed: the H200's memory bandwidth reaches 4.8 TB/s, allowing you to feed the tensor cores faster than ever.

- The Reality: These are passive cards. They rely entirely on the server setup to push air through them with high-static pressure fans. If you put these in a standard case, they will throttle instantly.
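To see why bandwidth matters so much, consider single-stream LLM decoding, where generating each token requires reading roughly all of the model's weights from memory. Under that simplifying assumption, bandwidth divided by weight size gives a ceiling on tokens per second. The sketch below is a rough roofline-style estimate, not a benchmark: it ignores KV-cache traffic, compute time, and batching, and the 70GB model is a hypothetical example.

```python
def decode_tokens_per_s_ceiling(bandwidth_tb_s: float, weights_gb: float) -> float:
    """Upper bound on batch-size-1 decode speed for a memory-bound model.

    Assumes every weight byte is read once per generated token and nothing
    else competes for memory bandwidth (a deliberate simplification).
    """
    bytes_per_second = bandwidth_tb_s * 1e12
    bytes_per_token = weights_gb * 1e9
    return bytes_per_second / bytes_per_token


if __name__ == "__main__":
    # 4.8 TB/s is the H200 figure quoted above; 70 GB is a hypothetical
    # model footprint (for example, 70B parameters at 8 bits each).
    ceiling = decode_tokens_per_s_ceiling(4.8, 70)
    print(f"Ceiling: about {ceiling:.1f} tokens/s per stream")
```

Real throughput lands below this ceiling, but the relationship holds: for memory-bound workloads, more bandwidth raises the limit directly.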

The Solution: Pro Maestro GD (10 GPU server)

You don't buy an H200; you buy a platform. The Pro Maestro GD is our heavy lifter for this class.

- Capacity: Supports up to 10x NVIDIA H200 or H100 GPUs.

- Architecture: For LLM training, interconnect speed is everything. The Pro Maestro GD concentrates high-density compute in a single machine, which keeps GPU-to-GPU traffic inside one node rather than across the network.

Final Recommendation

At ProX PC, we believe in matching the hardware to the bottleneck.

- Go with Pro Maestro GE (8x 5090) if you are a media house, render farm, or AI startup. We can help you achieve the fastest render times and blazing-fast inference at the lowest price point.

- Go with Pro Maestro GQ P (4x Pro 6000 Server) for compact, reliable inference nodes in a rack environment.

- Go with Pro Maestro GD (H200) if you run a data center or are building foundation models. When you need to train a model with trillions of parameters, there is no substitute for HBM3e memory and server-grade scalability.

We don't just sell boxes; we architect solutions. Whether you need the brute force of the 5090 or the precision of the H200, we have the platform ready to deploy.

- Pro Maestro GQ P (4 GPU Server)

- Pro Maestro GE A (8 GPU Server)

- Pro Maestro GD (10 GPU Server)